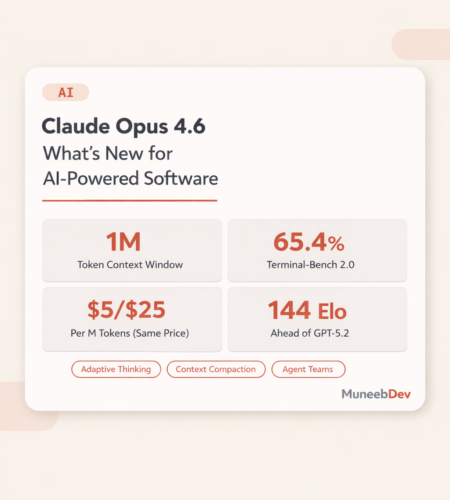

TL;DR: Anthropic released Claude Opus 4.6 on February 5, 2026. It introduces a 1M token context window, adaptive thinking with four effort levels, context compaction for effectively unbounded conversations, agent teams for parallel coding, and 128K output tokens, all at the same $5/$25 per million tokens as Opus 4.5 (for requests up to 200K tokens of context). It leads benchmarks in agentic coding (65.4% on Terminal-Bench 2.0), knowledge work (1,606 Elo on GDPval-AA, 144 points ahead of GPT-5.2), and novel problem-solving (68.8% on ARC-AGI-2, nearly double Opus 4.5). If you’re building AI-powered software for clients, this changes how you architect agents, handle large codebases, and control costs.

Anthropic didn’t just update Claude. They rebuilt what their flagship model can do.

Claude Opus 4.6 shipped on February 5, 2026. About twenty minutes later, OpenAI released GPT-5.3 Codex. The timing tells you everything about where the AI industry is right now. But the features tell you more.

If you’re a business owner evaluating AI tools for your software, or a decision-maker trying to understand which model actually delivers ROI, here’s what matters and what doesn’t.

What Actually Changed in Opus 4.6

1M Token Context Window

The biggest practical upgrade. Opus 4.6 can now process roughly 750,000 words in a single prompt — an entire medium-sized codebase, a full legal discovery set, or 15–20 complete technical documents at once.

On MRCR v2, a benchmark that tests whether a model can find specific facts buried in massive text, Opus 4.6 scores 76%. Sonnet 4.5, the previous Claude model offered with a 1M token context window, scored 18.5%. That’s not an incremental gain; it’s a different capability class.

For businesses, this means:

- Feed your entire codebase into a single session for architecture reviews

- Process complete contract packages without splitting documents

- Analyze months of customer support transcripts in one pass

Cost note: Standard pricing applies up to 200K tokens. Beyond that, premium pricing kicks in at $10/$37.50 per million tokens for input/output. Plan your context strategy accordingly.
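To see what the tier switch means for a single request, here is a minimal cost estimator using the prices quoted in this article. It assumes, as a simplification you should verify against Anthropic's pricing page, that the premium rate applies to the whole request once the input exceeds 200K tokens. The function and constant names are hypothetical.

```python
# Prices in USD per million tokens, as quoted in this article.
STANDARD = {"input": 5.00, "output": 25.00}
LONG_CONTEXT = {"input": 10.00, "output": 37.50}
LONG_CONTEXT_THRESHOLD = 200_000  # input tokens

def estimate_request_cost(input_tokens: int, output_tokens: int) -> float:
    """Estimate the USD cost of one Opus 4.6 request (no caching, no fast mode).

    Assumption: once input exceeds 200K tokens, the whole request is billed
    at the long-context rate, not just the tokens beyond the threshold.
    """
    rates = LONG_CONTEXT if input_tokens > LONG_CONTEXT_THRESHOLD else STANDARD
    return (input_tokens * rates["input"] + output_tokens * rates["output"]) / 1_000_000

if __name__ == "__main__":
    # A 150K-token prompt stays in the standard tier.
    print(f"${estimate_request_cost(150_000, 4_000):.2f}")  # $0.85
    # An 800K-token codebase dump crosses into long-context pricing.
    print(f"${estimate_request_cost(800_000, 4_000):.2f}")  # $8.15
```

Under this assumption, moving from a 150K to an 800K prompt raises the cost of one call roughly tenfold, which is why it pays to decide up front what actually needs to be in context.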

Adaptive Thinking

Previously, developers had a binary choice: extended thinking on or off. Now Claude decides dynamically when deeper reasoning helps.

Four effort levels give you control over the intelligence-speed-cost tradeoff:

| Effort Level | Best For | Cost Impact |
|---|---|---|
| Low | Classification, simple extraction, formatting | Lowest: skips thinking entirely for simple tasks |
| Medium | Standard Q&A, summarization, moderate code generation | Balanced |
| High (default) | Complex code generation, multi-step analysis, debugging | Standard: Claude thinks when useful |
| Max | Critical debugging, legal analysis, security reviews, hard benchmarks | Highest: forces deep reasoning on every request |

This is a genuine cost optimization lever. If you’re running agentic workflows that make dozens of API calls, dropping non-critical steps to medium or low while keeping complex reasoning at high or max can cut your bill significantly — thinking tokens are billed as output tokens at $25 per million.
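The savings come straight from output-token arithmetic. The sketch below prices the thinking tokens of a hypothetical ten-step agent run. The per-effort token counts are made-up placeholders, not Anthropic figures, so substitute averages from your own usage logs.

```python
# Thinking tokens are billed as output tokens: $25 per million on Opus 4.6.
OUTPUT_PRICE_PER_TOKEN = 25.00 / 1_000_000

# Hypothetical average thinking tokens per call at each effort level.
# Illustrative placeholders only; measure your own workload.
AVG_THINKING_TOKENS = {"low": 0, "medium": 1_000, "high": 4_000, "max": 12_000}

def thinking_cost(steps: list[str]) -> float:
    """Return the USD spent on thinking tokens for a sequence of effort levels."""
    return sum(AVG_THINKING_TOKENS[effort] for effort in steps) * OUTPUT_PRICE_PER_TOKEN

# Ten agent steps, all at the default "high" effort.
all_high = ["high"] * 10
# Same workflow: two hard steps stay at max/high, the rest drop to medium/low.
tuned = ["max", "high"] + ["medium"] * 4 + ["low"] * 4

print(f"all high: ${thinking_cost(all_high):.3f}")
print(f"tuned:    ${thinking_cost(tuned):.3f}")
```

With these placeholder numbers, the tuned run spends half as much on thinking ($0.50 vs $1.00) even though one step was raised to max.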

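```python
import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

response = client.messages.create(
    model="claude-opus-4-6",
    max_tokens=16000,
    thinking={"type": "adaptive"},
    # effort defaults to "high"; override it per task with the effort parameter
    messages=[{"role": "user", "content": "Analyze this codebase for security vulnerabilities..."}],
)
```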

Context Compaction

This solves a problem every developer building AI agents has hit: context windows fill up, performance degrades, and you lose continuity.

Context compaction automatically detects when a conversation approaches the token limit and summarizes older portions into a compressed state. The essentials are preserved; the noise is dropped. Your agent can theoretically run indefinitely without hitting the wall that’s plagued long-running AI tasks.
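You don't need to build this yourself to use the feature, but the underlying idea is easy to picture. The sketch below is a client-side illustration of the general technique, not Anthropic's implementation: when an estimated token count crosses a threshold, older turns are replaced by a single summary message and the most recent turns are kept verbatim. The word-count "tokenizer" and the `summarize` stub are hypothetical placeholders; a real agent would use a proper token counter and a model call to write the summary.

```python
from dataclasses import dataclass

@dataclass
class Message:
    role: str
    content: str

def estimate_tokens(messages: list[Message]) -> int:
    """Crude placeholder: count whitespace-separated words as tokens."""
    return sum(len(m.content.split()) for m in messages)

def summarize(messages: list[Message]) -> str:
    """Placeholder summarizer; a real agent would ask a model for this."""
    return f"Summary of {len(messages)} earlier messages."

def compact(messages: list[Message], limit: int, keep_recent: int = 4) -> list[Message]:
    """Replace older turns with one summary once the estimate exceeds `limit`."""
    if estimate_tokens(messages) <= limit or len(messages) <= keep_recent:
        return messages
    older, recent = messages[:-keep_recent], messages[-keep_recent:]
    return [Message("user", summarize(older))] + recent

# 20 alternating turns of 50 "tokens" each: 1,000 total, over a 500 limit.
history = [Message("user" if i % 2 == 0 else "assistant", "word " * 50) for i in range(20)]
compacted = compact(history, limit=500)
print(len(history), "->", len(compacted))  # 20 -> 5
print(compacted[0].content)                # Summary of 16 earlier messages.
```

The design choice worth noting is `keep_recent`: the latest turns usually carry the active task state, so they survive verbatim while older history is condensed.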

For businesses running AI-powered customer support, code review pipelines, or research agents — this is the feature that makes “always-on” AI assistants actually viable.

Agent Teams

This is the headline feature for Claude Code users. Instead of one AI agent working through tasks sequentially, Opus 4.6 lets you spin up multiple fully independent Claude instances that work in parallel.

One agent leads and coordinates. Teammates handle execution. Each gets its own context window. They communicate through what Anthropic calls a “Mailbox Protocol.”

In practice: one agent reviews authentication code while another examines database queries and a third analyzes API endpoints, all at the same time. Anthropic demonstrated this by having agent teams build a working C compiler from scratch: roughly 100,000 lines of code that can compile a bootable Linux kernel for three CPU architectures.

Cost warning: Each agent has its own context window. Multi-agent workflows burn tokens fast. Reserve this for complex tasks where the speed gain justifies the cost.
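Agent teams run inside Claude Code, so there is nothing to code to use them. To make the coordination pattern and the cost warning concrete, here is a toy model using `asyncio`: a lead fans tasks out to teammates, each teammate keeps its own context list, and results flow back through a shared queue standing in for the mailbox. Everything here (the `Teammate` class, the `lead` function, word counts as a proxy for tokens) is hypothetical and is not Anthropic's Mailbox Protocol.

```python
import asyncio
from dataclasses import dataclass, field

@dataclass
class Teammate:
    name: str
    context: list[str] = field(default_factory=list)  # each agent's own context

    async def run(self, task: str, mailbox: asyncio.Queue) -> None:
        self.context.append(task)
        await asyncio.sleep(0.01)  # stand-in for the model working on the task
        result = f"{self.name} finished: {task}"
        self.context.append(result)
        await mailbox.put((self.name, result))

async def lead(tasks: list[str]) -> tuple[list[str], int]:
    mailbox: asyncio.Queue = asyncio.Queue()
    team = [Teammate(f"teammate-{i}") for i in range(len(tasks))]
    # Fan out: all teammates work concurrently.
    await asyncio.gather(*(m.run(t, mailbox) for m, t in zip(team, tasks)))
    reports = [mailbox.get_nowait()[1] for _ in range(mailbox.qsize())]
    # Every agent carries its own context, so usage adds up across the team.
    total_context_words = sum(len(" ".join(m.context).split()) for m in team)
    return reports, total_context_words

reports, words = asyncio.run(lead(["review auth code", "examine DB queries", "analyze API endpoints"]))
for r in reports:
    print(r)
print("words held across all contexts:", words)
```

Even in this toy, total context grows with the number of teammates rather than staying fixed, which is the mechanism behind the cost warning above.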

128K Output Tokens

Double the previous 64K limit. In practical terms, that’s roughly 96,000 words of output in a single response — enough for complete documentation, full codebase migrations, or comprehensive technical reports without breaking them into multiple requests.

Fast Mode

For latency-sensitive work, fast mode delivers 2.5x faster output token generation at premium pricing ($30/$150 per million tokens). Same intelligence, faster inference. Worth it for real-time coding sessions or interactive debugging where waiting kills flow.

Benchmark Results That Matter for Business

Numbers without context are meaningless. Here’s what the benchmarks actually tell you about production value:

| Benchmark | Opus 4.6 | GPT-5.2 | What It Measures |
|---|---|---|---|
| GDPval-AA | 1,606 Elo | 1,462 Elo | Real-world knowledge work across 44 professional occupations (finance, legal, operations) |
| Terminal-Bench 2.0 | 65.4% | 64.7% | Agentic coding in the terminal: autonomous debugging, multi-file code reviews |
| SWE-bench Verified | 80.8% | 72.1% | Resolving real GitHub issues in production codebases |
| OSWorld | 72.7% | n/a | Agentic computer use: navigating applications, filling forms, automating workflows |
| BrowseComp | 84.0% | n/a | Finding hard-to-locate information across the web |
| ARC-AGI-2 | 68.8% | 54.2% | Novel problem-solving that resists memorization |
| BigLaw Bench | 90.2% | n/a | Legal reasoning and compliance tasks |

The GDPval-AA result is the one business owners should pay attention to. A 144-point Elo gap over GPT-5.2 means Opus 4.6 produces better results roughly 70% of the time on economically valuable tasks — the kind of work that costs you money when AI gets it wrong.
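The 70% figure follows from the standard Elo expected-score formula, E = 1 / (1 + 10^(-Δ/400)), where Δ is the rating gap. A quick check, assuming GDPval-AA uses the conventional 400-point Elo scale:

```python
def elo_win_probability(rating_gap: float) -> float:
    """Expected score of the higher-rated side under the standard Elo model."""
    return 1 / (1 + 10 ** (-rating_gap / 400))

gap = 1606 - 1462  # Opus 4.6 vs GPT-5.2 on GDPval-AA
print(gap, f"{elo_win_probability(gap):.1%}")  # 144 69.6%
```

A 144-point gap works out to about 69.6%, which rounds to the "roughly 70%" quoted above.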

Pricing: Same Cost, More Capability

Anthropic held pricing steady. That’s the story here.

| Tier | Input | Output |
|---|---|---|
| Standard (up to 200K context) | $5 / M tokens | $25 / M tokens |
| Long context (200K–1M) | $10 / M tokens | $37.50 / M tokens |
| Fast mode (up to 200K) | $30 / M tokens | $150 / M tokens |
| Prompt caching (write) | $6.25 / M tokens | n/a |
| Prompt caching (read) | $0.50 / M tokens | n/a |

For comparison, GPT-5.2 runs at $2/$10 per million tokens — cheaper per token, but the benchmark gap on knowledge work tasks means you may need more retries and manual correction. The real cost is total cost of completion, not price per token.
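One way to make "total cost of completion" concrete: if each attempt succeeds independently with probability p, the expected number of attempts is 1/p and the expected number of failures is (1 − p)/p. The sketch below adds a per-failure review cost on top. The token counts, success rates, and review cost are invented for illustration, not derived from any benchmark; plug in your own measurements.

```python
def attempt_cost(input_tokens: int, output_tokens: int,
                 in_price: float, out_price: float) -> float:
    """USD for one attempt, with prices given per million tokens."""
    return (input_tokens * in_price + output_tokens * out_price) / 1_000_000

def expected_cost_per_success(cost_per_attempt: float, success_rate: float,
                              review_cost_per_failure: float = 0.0) -> float:
    """Expected USD per completed task, retrying independently until success.

    Attempts are geometric: 1/p attempts and (1-p)/p failures on average,
    and each failure also incurs a manual review cost.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    failures = (1 - success_rate) / success_rate
    return cost_per_attempt / success_rate + review_cost_per_failure * failures

# Hypothetical task: 20K input, 5K output tokens, $2 of human review per failure.
# Success rates of 0.9 and 0.6 are invented for the example.
opus = expected_cost_per_success(attempt_cost(20_000, 5_000, 5.00, 25.00), 0.9, 2.00)
cheaper = expected_cost_per_success(attempt_cost(20_000, 5_000, 2.00, 10.00), 0.6, 2.00)
print(f"Opus 4.6 at $5/$25:   ${opus:.3f} per success")
print(f"Cheaper at $2/$10:    ${cheaper:.3f} per success")
```

With these made-up inputs the cheaper-per-token model costs about $1.48 per finished task versus about $0.47, because retries and review dominate. With a higher success rate for the cheaper model, the result can flip, which is why measuring on your own workload matters.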

Cost optimization strategy:

- Use adaptive thinking with low or medium effort for simple tasks

- Reserve high and max for complex reasoning

- Use prompt caching aggressively: cache reads at $0.50/M are 10x cheaper than fresh input ($5/M)

- Route simple tasks to Sonnet 4.6 ($3/$15) and reserve Opus for hard problems

- Use context compaction for long-running agents instead of restarting sessions
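Caching pays off quickly because a cache write costs only 1.25x the base input rate ($6.25 vs $5 per million), while each read costs a tenth of it ($0.50). A quick comparison for a 100K-token prompt prefix reused across several standard-tier requests, using the prices in the table above and assuming the cache never expires between calls:

```python
BASE_INPUT = 5.00    # $/M tokens, fresh input
CACHE_WRITE = 6.25   # $/M tokens, first request writes the prefix to cache
CACHE_READ = 0.50    # $/M tokens, later requests read it back

def prefix_cost(prefix_tokens: int, requests: int, cached: bool) -> float:
    """USD spent on a shared prompt prefix across `requests` calls (requests >= 1)."""
    millions = prefix_tokens / 1_000_000
    if not cached:
        return requests * millions * BASE_INPUT
    # One write, then (requests - 1) reads; assumes no cache expiry in between.
    return millions * (CACHE_WRITE + (requests - 1) * CACHE_READ)

for n in (1, 2, 10):
    fresh = prefix_cost(100_000, n, cached=False)
    cached = prefix_cost(100_000, n, cached=True)
    print(f"{n:>2} requests: fresh ${fresh:.2f} vs cached ${cached:.2f}")
```

For a single request, caching costs slightly more ($0.63 vs $0.50); from the second request onward it is cheaper, and at ten requests the prefix costs about $1.08 instead of $5.00.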

Breaking Changes: What to Fix Before Upgrading

If you’re already building with Claude’s API, Opus 4.6 introduces changes that will break existing code:

Prefilling disabled. Assistant message prefilling — where you seed Claude’s response with a partial message — now returns a 400 error. Anthropic removed this citing safety concerns. Migrate to structured outputs or system prompt instructions.

Thinking API deprecated. thinking: {type: "enabled", budget_tokens: N} still works but is deprecated. Migrate to thinking: {type: "adaptive"} with the effort parameter.

Output format moved. output_format is now output_config.format. Old parameter still works but will be removed.
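If your code builds request parameters as plain dicts, a small shim can catch these three changes before you switch model IDs. This is a hypothetical helper, not part of the Anthropic SDK. It rewrites the deprecated thinking and output_format shapes described above and refuses requests that end in an assistant prefill. It does not choose an effort level for you, and it passes your output_format value through unchanged (the one in the example is only illustrative).

```python
import copy

def migrate_request_for_opus_4_6(params: dict) -> dict:
    """Return a copy of Messages API kwargs updated for the Opus 4.6 changes.

    Hypothetical helper: adapt it to however your code builds requests.
    """
    new = copy.deepcopy(params)  # never mutate the caller's dict
    new["model"] = "claude-opus-4-6"

    # 1. Prefilling now returns a 400 error, so fail fast instead of sending it.
    messages = new.get("messages", [])
    if messages and messages[-1].get("role") == "assistant":
        raise ValueError("Assistant prefill is not supported on Opus 4.6; use "
                         "system prompt instructions or structured outputs.")

    # 2. thinking={"type": "enabled", "budget_tokens": N} is deprecated.
    thinking = new.get("thinking")
    if thinking and thinking.get("type") == "enabled":
        new["thinking"] = {"type": "adaptive"}

    # 3. output_format moved to output_config.format.
    if "output_format" in new:
        new.setdefault("output_config", {})["format"] = new.pop("output_format")

    return new

old = {
    "model": "claude-opus-4-5",
    "max_tokens": 4096,
    "thinking": {"type": "enabled", "budget_tokens": 8000},
    "output_format": {"type": "json_schema", "schema": {"type": "object"}},  # example value
    "messages": [{"role": "user", "content": "Summarize this contract."}],
}
print(migrate_request_for_opus_4_6(old))
```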

JSON escaping differences. Opus 4.6 may produce slightly different JSON string escaping in tool call arguments. Standard JSON parsers handle this automatically, but if you’re parsing tool call input as raw strings, test your logic.
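The safe pattern is to compare parsed values rather than raw strings. The article doesn't say which escapes differ, so the pair below is purely illustrative: `\/` versus `/` and `\u00e9` versus `é` produce different strings but identical JSON values.

```python
import json

# Two valid JSON encodings of the same tool-call arguments.
a = '{"path": "src/main.py", "note": "caf\\u00e9"}'
b = '{"path": "src\\/main.py", "note": "café"}'

print(a == b)                          # False: raw strings differ
print(json.loads(a) == json.loads(b))  # True: parsed values match
```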

Opus 4.6 vs GPT-5.4 vs Gemini 3 Pro: Which One Should You Use?

The honest answer: it depends on your workload.

| Use Case | Best Choice | Why |
|---|---|---|
| Agentic coding & long-running dev tasks | Opus 4.6 | Highest Terminal-Bench score, agent teams, context compaction |
| Cost-sensitive high-volume API calls | GPT-5.4 | $2.50/$15 pricing, tool search reduces token waste |
| Multimodal processing (video, images + text) | Gemini 3 Pro | Native 2M token context, strong visual reasoning |
| Legal, finance, compliance work | Opus 4.6 | 90.2% BigLaw Bench, 1,606 Elo GDPval-AA |
| Computer use / browser automation | GPT-5.4 | 75% OSWorld-Verified, native computer use |
| Budget-constrained projects | Sonnet 4.6 | $3/$15, strong coding performance, 1M context |

The smartest teams aren’t picking one model. They’re routing tasks to whichever model handles them best. Simple extraction goes to Sonnet. Deep legal analysis goes to Opus. High-volume classification goes to GPT-5.4 Mini. Build your architecture for multi-model routing from day one.
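A routing layer can start as little more than a lookup table. The sketch below encodes the examples in the paragraph above (simple extraction to Sonnet, deep legal analysis to Opus, high-volume classification to GPT-5.4 Mini) plus agentic coding from the comparison table. The task categories, the `route` function, and the effort pairings are hypothetical. Only `claude-opus-4-6` appears as a model ID elsewhere in this article; the other IDs are placeholders to replace with the identifiers your providers actually use.

```python
from typing import NamedTuple, Optional

class Route(NamedTuple):
    model: str
    effort: Optional[str]  # effort level, where the model supports it

# Placeholder model IDs except "claude-opus-4-6".
ROUTES = {
    "simple_extraction": Route("claude-sonnet-4-6", "low"),
    "classification": Route("gpt-5.4-mini", None),
    "legal_analysis": Route("claude-opus-4-6", "max"),
    "agentic_coding": Route("claude-opus-4-6", "high"),
}
DEFAULT_ROUTE = Route("claude-sonnet-4-6", "medium")

def route(task_type: str) -> Route:
    """Pick a model (and effort level) for a task category; unknown types fall back."""
    return ROUTES.get(task_type, DEFAULT_ROUTE)

for task in ("simple_extraction", "legal_analysis", "summarization"):
    print(task, "->", route(task))
```

Keeping the mapping in data rather than scattered `if` statements makes it easy to re-point a category at a different model when prices or benchmarks change.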

What This Means If You’re Building AI-Powered Software

Three practical takeaways:

1. Long-running AI agents are now viable. Context compaction plus adaptive thinking means agents can operate across extended sessions without performance degradation or context window crashes. If you’ve been holding off on AI agent features for your product, the infrastructure just caught up.

2. Cost control is now a first-class API feature. Effort levels, prompt caching, and model routing (Opus for hard tasks, Sonnet for simple ones) give you genuine levers to control spend. The days of “throw everything at the biggest model and hope” are over.

3. The AI coding market just got more competitive. Claude Code hitting $1 billion in annualized revenue, agent teams shipping as a preview, and 44% of enterprises now using Anthropic in production — if your business builds software, you’re already competing with teams using these tools. The question isn’t whether to adopt AI-assisted development; it’s how fast you can integrate it effectively.

Getting Started

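```python
import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

# Basic Opus 4.6 call with adaptive thinking
response = client.messages.create(
    model="claude-opus-4-6",
    max_tokens=8192,
    thinking={"type": "adaptive"},
    messages=[
        {"role": "user", "content": "Review this codebase for security vulnerabilities and suggest fixes."}
    ],
)

# With thinking enabled, the first content block may be a thinking block,
# so print only the text blocks.
for block in response.content:
    if block.type == "text":
        print(block.text)
```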

Opus 4.6 is available now on claude.ai, the Anthropic API, AWS Bedrock, Microsoft Foundry (Azure), and Google Cloud Vertex AI.

FAQ

Is Opus 4.6 worth the upgrade from Opus 4.5? For most workloads, yes. Same pricing, better performance on most benchmarks that matter for production work. The 1M context window alone is worth the migration.

Should I switch from GPT-5.2/5.4 to Opus 4.6? Depends on your use case. For agentic coding, legal/finance work, and long-context analysis, Opus 4.6 has clear advantages. For budget-sensitive high-volume calls and computer use agents, GPT-5.4 may be more cost-effective. Test both on your actual workloads.

What about the writing quality controversy? Some users report Opus 4.6 produces flatter prose than Opus 4.5 for creative fiction. For coding, reasoning, enterprise workflows, and technical writing, 4.6 is unambiguously better. If creative writing is your primary use case, test before committing.

Can I use the 1M context window on all plans? The 1M context window is available across Max, Team, and Enterprise plans by default. API users can access it at premium pricing ($10/$37.50 per million tokens beyond 200K context).

How do agent teams work with existing CI/CD pipelines? Agent teams are a Claude Code feature (research preview). Each agent operates via git worktrees, so they integrate naturally with existing version control workflows. The lead agent coordinates merges. It’s best suited for read-heavy tasks like codebase reviews rather than parallel write operations.
