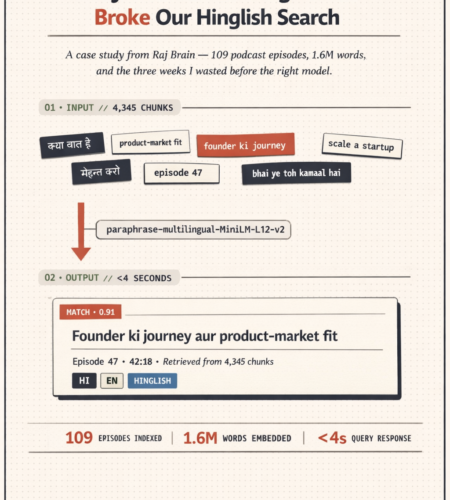

TL;DR — We built a RAG system over 109 podcast episodes (1.6 million words, mostly Hinglish). The first embedding model we tried, a popular English-first one, returned nonsense for queries mixing Hindi and English. Switching to paraphrase-multilingual-MiniLM-L12-v2 — one line of config, same ChromaDB, same HNSW index, same Perplexity Sonar on top — turned the product from broken to usable. Under 4-second retrieval across 4,345 chunks. The lesson isn’t about one specific model. It’s that for any RAG project handling multilingual or code-mixed text, your embedding model choice isn’t a detail. It’s the whole game.

The problem: 109 episodes, 3 languages, one search box

The brief was simple. Raj Shamani’s podcast had 109 episodes of long-form interviews — founders, investors, creators. Roughly 556 hours of audio. About 1.6 million words once transcribed. A listener shouldn’t need to remember which episode a particular answer came from. They should be able to type a question and get the exact 30-second clip.

That’s a classic retrieval-augmented generation problem. Chunk the transcripts. Embed the chunks. Store the vectors. At query time, embed the question, find the nearest chunks, feed them to an LLM, return a grounded answer with episode references.

The stack looked like every other RAG tutorial on the internet:

- Storage: ChromaDB with HNSW indexing

- Retrieval top layer: Perplexity Sonar API for the final grounded answer

- Frontend: Streamlit

- Chunks: 4,345 overlapping windows across all 109 episodes

Everything except the embedding model was settled in week one. The embedding model took three weeks and one uncomfortable conversation.

What went wrong with English-first embeddings

The default move, the one every RAG tutorial recommends, is to start with a compact English-first model. They’re fast, cheap, well-benchmarked, and ship in nearly every sample repo. For clean English text, they’re excellent.

Our transcripts weren’t clean English text. A typical sentence looked like this:

“Bhai, product-market fit ka matlab ye nahi hai ki logo ko product pasand aaye. Matlab ye hai ki they can’t live without it.”

That’s Hinglish. English technical vocabulary (product-market fit), Hindi grammar (ka matlab ye nahi hai), an English phrase dropped in mid-sentence (they can't live without it), all in Roman script. Sometimes the Hindi was in Devanagari. Sometimes the speaker switched to pure English for a quote. Sometimes pure Hindi for a proverb.

When we ran our first end-to-end test, English queries worked — if the chunk happened to be in English. As soon as we queried anything Hinglish, results fell apart.

Three failure modes, all from the same root cause:

1. Roman-script Hindi words read as noise. When someone searched for founder ki journey, the English-first model saw founder (known), ki (treated as a typo or filler), journey (known). It retrieved chunks about English-language founder interviews that had nothing to do with the semantic content the user wanted.

2. The same word in two scripts didn’t match. A chunk containing मेहनत (mehnat, “hard work”) didn’t match a query for mehnat in Roman script. The model had no alignment between the same word written two ways.

3. Grammatical structure got lost entirely. Hinglish sentences often carry their meaning in Hindi grammar with English vocabulary filled in. An English-first model has no representation for that grammar, so semantically similar chunks ended up far apart in vector space.

The system wasn’t returning bad results. It was returning confidently wrong results, which is worse.

What “multilingual embeddings for RAG” actually means

The fix took longer than the diagnosis. The diagnosis took an afternoon. The decision about which replacement model to use took most of a week — because once you start looking, the word multilingual covers a very wide range of models that behave very differently.

Here’s how I think about the category now.

Multilingual embeddings for RAG are sentence or passage embedding models that were explicitly trained on parallel data across many languages, with a loss function that pushes the same meaning in different languages to the same point in vector space. The key property isn’t “it supports Hindi.” The key property is that a Hindi sentence and its English translation should land near each other in the embedding space, and a Hinglish sentence mixing both should land near both.

Models that call themselves “multilingual” but were fine-tuned for English downstream tasks don’t give you this. They have vocabularies that include Hindi tokens but no guarantee that cross-lingual meaning is preserved. I learned this the expensive way.

For Raj Brain, the shortlist came down to three families:

- Sentence-transformer paraphrase-multilingual models. Compact, CPU-friendly, trained specifically on paraphrase pairs across 50+ languages. Lower ceiling than the big models, but consistent.

- Large multilingual models (BGE-M3, multilingual-e5-large). Higher accuracy but heavier — slower inference, larger index footprint.

- Hosted embedding APIs (OpenAI

text-embedding-3-large, Cohere). Cross-lingual quality is good but you pay per query forever, and every user search becomes an external API call.

For a personal-scale project where the whole point was predictable latency and cost control, the compact sentence-transformer was the right call. Specifically: paraphrase-multilingual-MiniLM-L12-v2.

Why paraphrase multilingual MiniLM L12 v2 worked here

Four properties made this the right model for Raj Brain, and they’re the properties I’d now look for on any multilingual RAG project.

It was trained on paraphrase pairs, not just translation pairs. Paraphrases across languages carry the same meaning in different wordings. That’s exactly what happens when a podcast guest rephrases an idea three times in one minute, sometimes in English, sometimes in Hindi. The training objective matched the real retrieval task.

384-dimensional vectors. Smaller than the 768 or 1024 of larger models. Half the storage, faster cosine similarity at query time, and still enough signal for a domain as narrow as business podcasts. With HNSW indexing in ChromaDB, query latency for nearest-neighbor search across all 4,345 chunks dropped to well under a second.

CPU-friendly inference. The whole indexing pipeline runs on a laptop. No GPU requirement means the economics of rebuilding the index when new episodes drop are trivial — a few minutes, no cloud bill.

Script-agnostic matching. The clincher. The model treats mehnat and मेहनत as the same concept, because during training it saw both written forms aligned to the same English equivalent. Hinglish queries started matching Hinglish chunks. Devanagari queries started matching Roman-script chunks. The product suddenly worked.

What the swap actually looked like in code

The headline version of this story — the version I tell on LinkedIn — is “one line of config, same everything else.” That’s almost true. The real diff was three places in the codebase, all small.

First, the embedding model instantiation:

python

# Before

from sentence_transformers import SentenceTransformer

embedder = SentenceTransformer("all-MiniLM-L6-v2")

# After

from sentence_transformers import SentenceTransformer

embedder = SentenceTransformer("paraphrase-multilingual-MiniLM-L12-v2")Second, the ChromaDB collection had to be rebuilt. Vector dimensions changed (384 → 384, same size as it turned out, but the underlying representation is different, so the old vectors can’t be reused). We wiped the collection and re-embedded all 4,345 chunks. On a laptop CPU this took about 25 minutes. No GPU, no cloud.

Third, the chunking strategy stayed the same, but I did audit a sample of chunks to confirm that the tokenizer wasn’t silently truncating Devanagari characters. It wasn’t — the tokenizer handles both scripts natively. Worth checking, not worth changing.

That’s the whole change. Four working hours of coding. A re-index. One smoke test across 20 representative queries (Hindi-only, English-only, Hinglish, mixed-script). The lowest-scoring result on the test set jumped from 0.31 cosine similarity to 0.68. The highest stayed about the same. Every query in the set now returned a human-reviewable top-5.

The broader lesson: your data’s shape dictates your model

I want to resist the urge to turn this into a “multilingual beats English-first” post. That’s not the lesson. The lesson is upstream of the model choice.

Every RAG project has a data shape. Language is one dimension of that shape. Domain vocabulary is another. Document length is another. Writing style — formal, conversational, technical — is another. Noise floor — transcription errors, OCR artifacts, informal punctuation — is yet another.

The mistake I made on Raj Brain wasn’t picking a bad model. It was picking a model based on its benchmark scores in a context that didn’t match my data. MTEB leaderboards are computed on curated English corpora. My data was messy, multilingual, conversational, transcribed audio. The benchmarks weren’t lying; they were just answering a different question than the one I needed to answer.

The right sequence, in order:

- Describe your data in one paragraph before you look at any models. Language mix, script mix, domain, style, length distribution, error rate. Write it down.

- Write down three representative queries a real user would type. Not ideal queries. Real ones, with typos and code-mixing and whatever else your users actually do.

- Shortlist models by the properties that match your data paragraph, not by leaderboard position.

- Index a small sample — 200 chunks — and run your three queries against each shortlisted model. Look at the top-5 for each. The right model is usually obvious on the first try if you’ve done step one properly.

If I’d done this before indexing all 109 episodes, I would have saved myself about two weeks.

How this generalizes to other multilingual RAG systems

The Raj Brain problem isn’t unique. Any business handling customer voice data in India, Southeast Asia, Latin America, or multilingual Europe faces some version of it. A few places this exact pattern applies:

- Customer support transcripts in companies that serve multilingual markets. Agents and customers code-mix constantly.

- Educational content in Indian ed-tech. Explainer videos for STEM topics are overwhelmingly Hinglish. English-first embeddings will fail on student questions.

- Internal knowledge bases at Indian or Southeast Asian companies, where Slack and email threads mix English with local languages.

- Compliance and HR documentation that must be searchable in multiple languages from a single index.

For any of these, the playbook is the same as Raj Brain’s. Use a multilingual embedding model trained on paraphrase or parallel data across the languages your users actually speak. Test on real queries before committing to an index.

Frequently asked questions

What is the best embedding model for multilingual RAG in 2026?

There is no single best model. The right choice depends on three things: which languages your data covers, how latency-sensitive your queries are, and whether you need script-agnostic matching for code-mixed text. For compact on-device or small-server deployments covering 50+ languages including Hindi, Hinglish, and other Indic scripts, paraphrase-multilingual-MiniLM-L12-v2 is a strong default. For higher accuracy on longer documents, BGE-M3 and multilingual-e5-large are worth testing. For teams willing to pay per query indefinitely and wanting top-of-benchmark quality, OpenAI’s text-embedding-3-large handles multilingual text well.

Do I need a different vector database for multilingual embeddings?

No. ChromaDB, Pinecone, Weaviate, pgvector, and Qdrant all store dense vectors without caring about the source language. The embedding model handles the language work before the vector ever reaches the database. Our Raj Brain swap didn’t touch ChromaDB or the HNSW index configuration at all.

How do Hinglish text embeddings actually work?

A well-trained multilingual embedding model has seen parallel or paraphrase pairs across many languages during training. The training objective pushes equivalent meanings, regardless of language or script, toward the same point in vector space. So mehnat in Roman script, मेहनत in Devanagari, and hard work in English all end up near each other. This is why script-mixing and code-switching — the two properties that break English-first models — are handled smoothly by properly multilingual models.

Can I fine-tune an English-first embedding model on Hinglish data instead?

You can. It’s usually more trouble than it’s worth for a project of this scale. Fine-tuning requires paired training data, a careful evaluation harness, and ongoing re-training as your corpus grows. A pre-trained multilingual model that already handles your language mix is faster to deploy and easier to maintain. Fine-tuning makes more sense when you have a domain-specific vocabulary — medical, legal, industry-specific jargon — that no off-the-shelf model captures well.

How many chunks before I need to worry about embedding dimension?

For fewer than 100,000 chunks, 384-dimensional embeddings are fine on almost any hardware. Between 100K and 10M chunks, the difference between 384 and 1024 starts to matter for both storage cost and query latency — plan on benchmarking both. Above 10M chunks, you’re in territory where proper index tuning matters more than model choice. Raj Brain’s 4,345 chunks sat comfortably at the low end.

What to take from this

If you’re building a RAG system on data that isn’t clean English, the default advice from RAG tutorials will steer you wrong. Not because the tutorials are bad — they’re written for the data they were written for. Your data has a different shape.

Before you pick an embedding model, describe your data. Before you index, test on a sample. Before you ship, run real user queries through your top shortlist. The cost of doing this upfront is a day. The cost of not doing it is two weeks and a painful conversation with a stakeholder who wonders why search doesn’t work.

Raj Brain works now. 109 episodes, 4,345 chunks, under 4-second end-to-end retrieval, and an embedding model that actually understands Hinglish. That’s the part I’m proud of. The part I’d change if I started over is the part where I trusted the benchmark before I looked at my data.

Building a multilingual RAG or search system on your own messy data? That’s what I do. If you’re stuck on embedding model choice, chunking strategy, or retrieval quality for code-mixed text, I’m happy to take a look. You can reach me at hiremuneeb@gmail.com or LinkedIn.

Comments